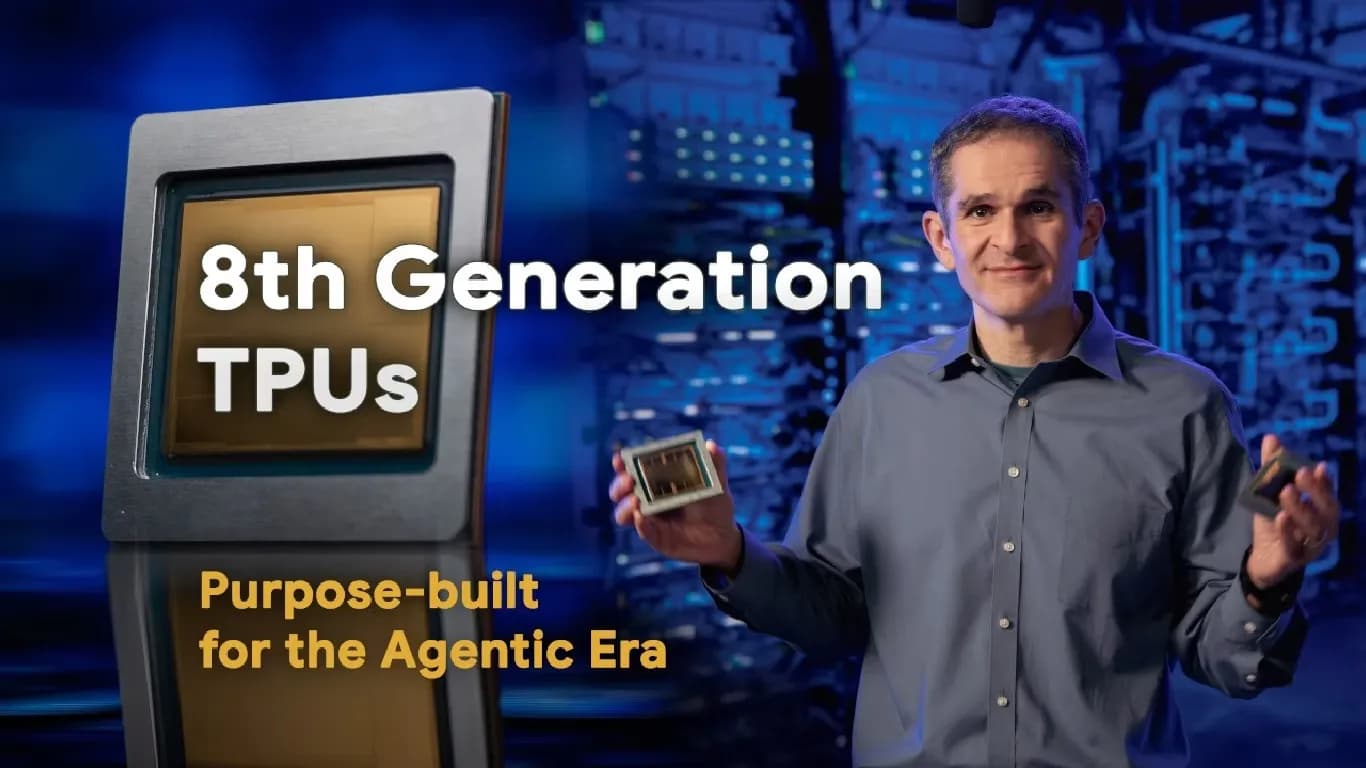

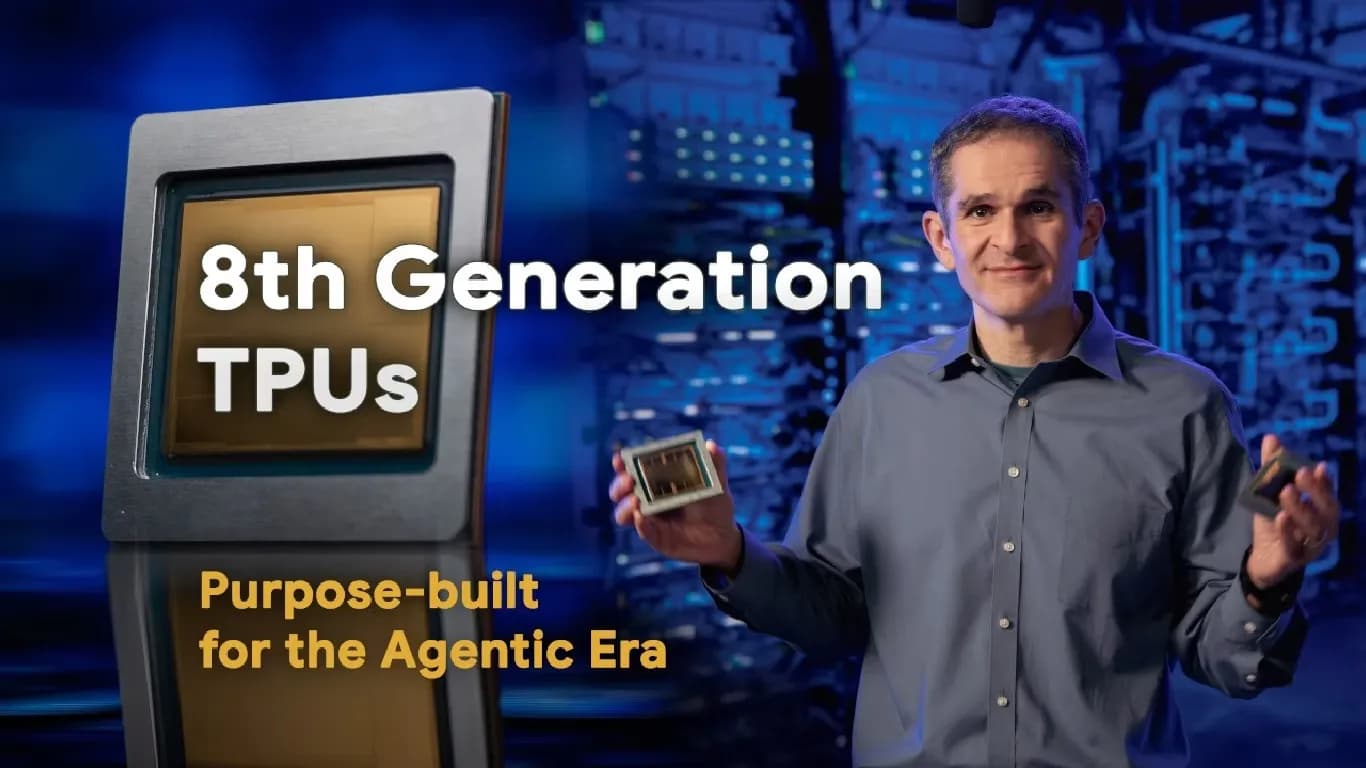

Google Unveils TPU 8t and TPU 8i Chips for Advanced AI Workloads

Google has launched two new chips, the TPU 8t and TPU 8i, to address the growing demands of artificial intelligence workloads. These eighth-generation Tensor Processing Units (TPUs) are designed for use in Google’s custom-built supercomputers. Each chip serves a distinct function, with one focusing on training AI models and the other on delivering fast, efficient responses.

Key Highlights

- Google introduces TPU 8t and TPU 8i chips for advanced AI workloads

- TPU 8t targets faster training of large AI models, with nearly three times the compute performance of the previous TPU generation

- TPU 8i is designed for efficient, low-latency inference in complex, collaborative AI tasks

- Both chips run on Google's Axion ARM-based CPU host and rely on liquid cooling for performance and energy efficiency

TPU 8t and TPU 8i Overview

Google describes the TPU 8t as a ‘training powerhouse.’ It is built primarily for training large AI models, a process that typically requires significant time and computing resources. Google claims the chip can shorten development cycles from months to weeks, and states that it delivers nearly three times the compute performance of the previous TPU generation.

The TPU 8i, on the other hand, is referred to as a ‘reasoning engine.’ This chip is intended for handling complex, collaborative, and iterative tasks performed by multiple specialised agents. Google highlights that the TPU 8i features increased memory bandwidth, which is essential for managing latency-sensitive inference workloads. Efficient handling of these workloads is crucial, as even minor inefficiencies can be amplified when agents interact at scale.

Technical Features and Infrastructure

Both the TPU 8t and TPU 8i run on Google’s Axion ARM-based CPU host and are supported by liquid cooling technology, which helps maintain high performance while controlling energy consumption. Google emphasises that both chips can handle a variety of workloads, but that their specialised designs provide significant efficiency and performance gains.

These chips are part of Google’s broader infrastructure, which includes purpose-built networking, data centres, and energy-efficient operations. The company states that this full-stack approach forms the foundation for delivering highly responsive agentic AI to users on a large scale.

Industry Context

As AI models grow in size and complexity, the need for specialised hardware has increased. Google’s introduction of the TPU 8t and TPU 8i aims to meet these requirements by offering improved training speeds and faster inference capabilities. The chips are expected to play a key role in supporting advanced AI applications and services.
